Quantum computing is not a future magic bullet; it’s a present-day paradigm shift requiring strategists to reframe their most complex optimization problems instead of settling for “good enough” answers.

- It fundamentally alters how solutions are found: instead of iterating through candidates one at a time, it uses superposition, entanglement, and interference to search solution spaces in ways classical machines cannot.

- The real barrier to adoption is not just hardware, but data strategy, security, and talent readiness.

Recommendation: Begin by identifying the NP-hard problems in your operations and evaluating your data’s “quantum-readiness,” focusing on security and accessibility.

For decades, logistics has been a game of ever-smarter approximations. Faced with computationally intractable challenges like the Traveling Salesman Problem (TSP) on a global scale, strategists have relied on sophisticated heuristics: brilliant shortcuts that find good solutions fast, but not provably optimal ones. We operate under a “heuristic ceiling,” where the sheer complexity of optimizing thousands of variables in real time means the provably best solution is usually out of reach. This forces companies to accept costly inefficiencies as a fundamental law of operations. Today, the conversation is full of buzzwords like AI and machine learning, which are powerful but still operate within the same classical computing framework.

But what if the very nature of computation could be rewritten? The emergence of quantum computing is not just another incremental improvement; it’s a fundamental break from the past. This isn’t about making our current computers faster. It is a completely new model of processing information, one that allows us to tackle problems previously deemed unsolvable. My angle as a researcher is clear: the most critical task for a logistics strategist today is not to wait for a “quantum-ready” button, but to begin rethinking the very architecture of their optimization challenges. The breakthroughs are happening now, and as a study shows, the global quantum computing market is projected to reach $12.62 billion by 2032, signaling a rapid transition from lab to live environment.

This article moves beyond the hype to provide a grounded, strategic framework. We will explore the fundamental principles that give quantum systems their power, assess the real-world signs of its impending disruption, and outline the practical steps and strategic pivots required to prepare. The goal is to equip you to move from being a passive observer to an active architect of your company’s quantum future, starting with the very problems you face today.

This in-depth analysis is structured to guide you from the foundational concepts of quantum advantage to the strategic imperatives for your business. The following sections will provide a clear roadmap for preparing for this computational revolution.

Summary: Preparing for the Next Leap in Logistics Optimization

- Why Qubits Calculate Exponentially Faster Than Classical Bits for Optimization?

- How to Determine If Your Industry Will Be Disrupted by Quantum Before 2030?

- IBM Q or Google Sycamore: Which Quantum Cloud Service Suits Research?

- The Cryogenic Constraint That Keeps Quantum Computers Out of Offices

- When to Hire Quantum Developers: Signals That the Tech Is Mature Enough

- Why Electric Motors Deliver Peak Torque at Zero RPM?

- Why Generative Models Predict Demand Better Than Traditional Statistics?

- How Quantum Computing Threatens Current Complex Cryptographic Problems?

Why Qubits Calculate Exponentially Faster Than Classical Bits for Optimization?

To understand the quantum leap, we must discard the simple binary logic of classical computing. A classical bit is a switch, either a 0 or a 1. A quantum bit, or qubit, leverages the principles of quantum mechanics, specifically superposition and entanglement. Superposition allows a qubit to exist in a weighted combination of 0 and 1 until it is measured. With just two qubits, the state spans four basis states at once (00, 01, 10, 11); with 300 qubits, it spans 2^300 states, more than there are atoms in the known universe. This exponential state space is the raw material of quantum’s power, but it is not a free lunch: a measurement returns only one outcome, so useful quantum algorithms rely on interference to amplify the probability of good answers and suppress bad ones.
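The growth in state space is easy to see in a classical simulation. The sketch below, a minimal illustration rather than any vendor’s API, builds the state of n qubits after a Hadamard gate on each one: it needs 2^n amplitudes, and the Born rule turns each amplitude into a measurement probability. The function names are hypothetical. A simulator must store every amplitude explicitly, which is exactly why classical machines cannot keep up as n grows, while a real quantum device returns just one bitstring per measurement.

```python
import math


def uniform_superposition(n_qubits):
    """Amplitudes after applying a Hadamard gate to every qubit of |00...0>."""
    dim = 2 ** n_qubits          # n qubits are described by 2**n amplitudes
    amp = 1 / math.sqrt(dim)     # every basis state gets the same amplitude
    return [amp] * dim


def measurement_probabilities(amplitudes):
    """Born rule: the probability of each outcome is |amplitude|**2."""
    return [abs(a) ** 2 for a in amplitudes]


state = uniform_superposition(2)
print(len(state))                        # 4 amplitudes: 00, 01, 10, 11
print(measurement_probabilities(state))  # 0.25 for each outcome
print(2 ** 300 > 10 ** 80)               # 300 qubits span more than ~10**80 basis states
```

With two qubits the list holds four equal amplitudes of 0.5, giving a probability of 0.25 for each of 00, 01, 10, and 11. The final line checks the 300-qubit claim against the commonly cited estimate of roughly 10^80 atoms in the observable universe.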

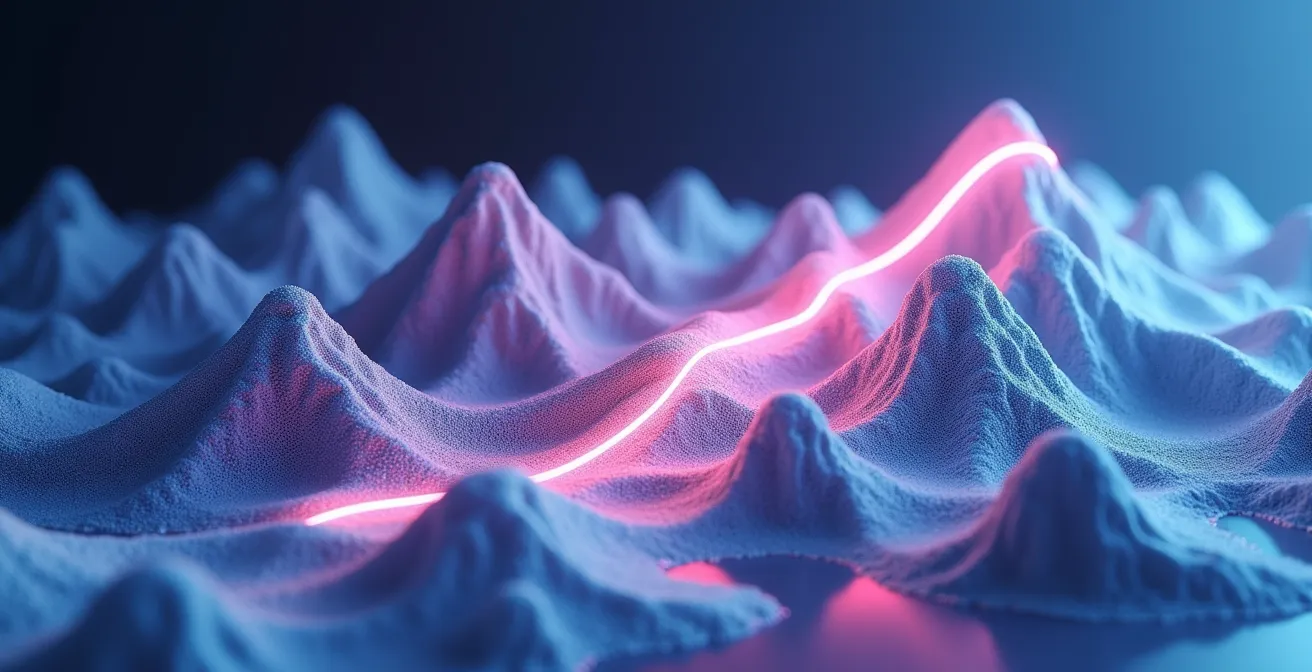

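For a logistics problem like the TSP, testing routes one by one is hopeless, because the number of possible tours grows factorially with the number of stops. Classical solvers therefore navigate a vast “computational landscape” of possible solutions with heuristics, climbing and descending its hills in search of the lowest valley, which represents the optimal route, and often settling in a local dip instead. Quantum annealers, such as those from D-Wave, exploit a phenomenon called quantum tunneling: rather than climbing over every mountain, the system can in principle pass through narrow energy barriers, which may help it escape local minima on the way to the global minimum. This is a promising mechanism rather than a guarantee; annealers return low-energy candidate solutions, not certified optimal ones, and whether tunneling delivers a practical speedup on real logistics instances is still an active research question.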
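To make the classical side of this comparison concrete, the following sketch contrasts exhaustive search, which checks every tour, with a greedy nearest-neighbour heuristic of the kind that produces “good enough” routes. It is a minimal illustration under stated assumptions: cities are hypothetical points on a plane, distance is straight-line Euclidean, and the helper names are invented for this example.

```python
import itertools
import math


def route_length(route, coords):
    """Length of a closed tour that visits coords in the given order."""
    return sum(math.dist(coords[route[i]], coords[route[(i + 1) % len(route)]])
               for i in range(len(route)))


def brute_force_tsp(coords):
    """Exact answer by testing every tour that starts at city 0 (tiny n only)."""
    others = range(1, len(coords))
    best = min(itertools.permutations(others),
               key=lambda p: route_length((0,) + p, coords))
    return (0,) + best


def nearest_neighbour_tsp(coords):
    """Greedy heuristic: always drive to the closest unvisited city."""
    route, unvisited = [0], set(range(1, len(coords)))
    while unvisited:
        last = coords[route[-1]]
        nxt = min(unvisited, key=lambda c: math.dist(last, coords[c]))
        route.append(nxt)
        unvisited.remove(nxt)
    return tuple(route)


def distinct_tours(n):
    """Number of distinct undirected tours through n cities: (n - 1)! / 2."""
    return math.factorial(n - 1) // 2


depots = [(0, 0), (2, 0), (2, 2), (0, 2), (1, 5)]  # hypothetical city coordinates
exact = brute_force_tsp(depots)
greedy = nearest_neighbour_tsp(depots)
print(route_length(exact, depots) <= route_length(greedy, depots))  # exact never loses
print(distinct_tours(10), distinct_tours(20))  # 181440 and roughly 6.1e16
```

Exhaustive search is exact but only feasible for a handful of stops: 10 cities already give 181,440 distinct tours and 20 cities roughly 6.1 × 10^16. The greedy heuristic runs almost instantly but carries no optimality guarantee, which is the heuristic ceiling in miniature.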

Case Study: Google’s Quantum Supremacy

A prime example of this power is Google’s Sycamore processor. In a landmark demonstration, Sycamore completed a random circuit sampling task in mere seconds. According to analysis, the world’s most powerful classical supercomputer at the time, Frontier, would have taken over 47 years to complete the same task. The task was designed specifically to showcase quantum advantage and has no direct commercial use, so it does not by itself prove a speedup for optimization. What it does establish is the underlying principle: for certain narrowly defined classes of problems, the speed difference between quantum and classical machines is not incremental; it is transformational.

This visualization helps conceptualize the difference. The classical path is long and arduous, while the quantum path finds a direct shortcut through the problem space.

This ability to work with a vast solution space in a fundamentally different way is why quantum computing is a promising candidate for the NP-hard problems that plague logistics. Quantum computers are not expected to solve NP-hard problems efficiently in every case; the realistic prize is finding better solutions, or good solutions faster, on the specific problem instances that matter to your business. That is how the heuristic ceiling could be raised, and in some cases broken.

How to Determine If Your Industry Will Be Disrupted by Quantum Before 2030?

The question is no longer *if* quantum will disrupt logistics, but *when* and *how*. For a strategist, waiting for the technology to be perfect is a losing game. The key is to assess your organization’s specific vulnerability and opportunity profile *now*. The timeline is accelerating; a McKinsey study found that 65% of respondents now believe fault-tolerant quantum computing will be achieved by 2030. This belief is driving early adoption and pilot programs. Your assessment should not be based on qubit counts, but on the nature of your core business problems.

The primary indicator is your reliance on solving NP-hard optimization problems. If your daily operations involve vehicle routing, container loading (bin packing), fleet management, or complex supply chain scheduling, you are in the quantum crosshairs. These are the exact types of problems that quantum algorithms like the Quantum Approximate Optimization Algorithm (QAOA) target, although no exponential speedup for them has been proven and the size of any practical advantage is still being researched. Early results are encouraging: research has reported that QAOA can reduce travel time in logistics operations by up to 30% compared with the classical methods it was benchmarked against, a figure that, if it held at scale, would represent billions in potential savings across the industry.
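QAOA is a hybrid loop: a short quantum circuit, parameterized by angles γ (gamma) and β (beta), prepares a state whose measurement statistics favour good solutions, and a classical optimizer tunes those angles. The sketch below simulates depth-1 QAOA on a classical state vector for MaxCut on a toy four-node ring, a standard textbook benchmark used here as a stand-in; a real routing or bin-packing problem would first have to be encoded as a QUBO (quadratic unconstrained binary optimization) with penalty terms and many more qubits. It uses no quantum SDK, all names are hypothetical, and the classical outer loop is a plain grid search for readability.

```python
import cmath
import math


def cut_value(bits, edges):
    """Number of edges whose endpoints land on different sides of the cut."""
    return sum(1 for u, v in edges if bits[u] != bits[v])


def qaoa_p1_expectation(n, edges, gamma, beta):
    """Expected cut size from a depth-1 QAOA circuit, simulated as a state vector."""
    dim = 2 ** n
    costs = [cut_value([(k >> q) & 1 for q in range(n)], edges) for k in range(dim)]
    # Start in the uniform superposition |+>^n.
    amps = [complex(1 / math.sqrt(dim))] * dim
    # Cost layer: phase each basis state by exp(-i * gamma * C(z)).
    amps = [a * cmath.exp(-1j * gamma * c) for a, c in zip(amps, costs)]
    # Mixer layer: apply exp(-i * beta * X) = cos(beta) I - i sin(beta) X to every qubit.
    cb, sb = math.cos(beta), math.sin(beta)
    for q in range(n):
        mask = 1 << q
        new = amps[:]
        for k in range(dim):
            if not k & mask:
                a0, a1 = amps[k], amps[k | mask]
                new[k] = cb * a0 - 1j * sb * a1
                new[k | mask] = -1j * sb * a0 + cb * a1
        amps = new
    return sum(abs(a) ** 2 * c for a, c in zip(amps, costs))


def grid_search(n, edges, steps=40):
    """Classical outer loop: try (gamma, beta) pairs and keep the best expectation."""
    best = (float("-inf"), 0.0, 0.0)
    for i in range(steps):
        for j in range(steps):
            gamma, beta = 2 * math.pi * i / steps, math.pi * j / steps
            value = qaoa_p1_expectation(n, edges, gamma, beta)
            if value > best[0]:
                best = (value, gamma, beta)
    return best


ring = [(0, 1), (1, 2), (2, 3), (3, 0)]  # toy 4-node ring; its maximum cut is 4
value, gamma, beta = grid_search(4, ring)
print(round(value, 3), round(gamma, 3), round(beta, 3))
```

On this ring the best expected cut should come out at about 3 of a possible 4, in line with the known 3/4 approximation ratio of depth-1 QAOA on ring graphs. That beats the expected cut of 2 from random guessing but still falls short of optimal, a reminder that QAOA is an approximation algorithm whose quality depends on circuit depth and parameter tuning.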

Another strong signal is the activity of competitors and adjacent industries. Major players like DHL, Volkswagen, and Airbus are not waiting; they are actively running quantum optimization pilots. Monitoring patent filings also provides a leading indicator of where commercial applications are heading. In the last year alone, patents have been filed outlining specific systems to translate supply-demand and routing challenges into quantum-optimized solutions. If your competitors are building quantum capabilities, you are already behind.

Your Quantum Disruption Readiness Checklist

- Problem Classification: Formally identify and list the core logistics problems in your operations (e.g., vehicle routing, cargo loading) that are classified as NP-hard and grow exponentially in complexity with more variables.

- ROI Impact Analysis: Calculate the potential financial impact of a 15-30% efficiency gain in these NP-hard problem areas. This defines the business case for quantum investment.

- Competitive & Patent Monitoring: Establish a process to track quantum pilot programs and patent filings from direct competitors and logistics leaders (e.g., DHL, Ford) to gauge industry adoption speed.

- Algorithm Maturity Tracking: Shift focus from hardware news (qubit counts) to tracking the development of specific quantum algorithms (like QAOA, VQE) relevant to your classified problems.

- Data Infrastructure Audit: Assess your current data collection and storage. Is the data required for your optimization problems clean, accessible, and structured for a potential quantum cloud environment?
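The ROI Impact Analysis step is simple arithmetic once the cost base is known. The sketch below, which assumes an efficiency gain translates one-for-one into a reduction in the annual cost of each NP-hard problem area, applies the 15-30% band from the checklist. The cost figures and function names are hypothetical placeholders, and the result is gross savings before any quantum investment or integration cost.

```python
def annual_savings(annual_cost, efficiency_gain):
    """Savings if a cost centre becomes `efficiency_gain` (a 0-1 fraction) cheaper to run."""
    if not 0 <= efficiency_gain <= 1:
        raise ValueError("efficiency_gain must be a fraction between 0 and 1")
    return annual_cost * efficiency_gain


# Hypothetical annual spend per NP-hard problem area (currency units).
problem_costs = {
    "vehicle routing": 40_000_000,
    "container loading": 8_000_000,
    "supply chain scheduling": 12_000_000,
}

for gain in (0.15, 0.30):  # the 15-30% band from the checklist
    total = sum(annual_savings(cost, gain) for cost in problem_costs.values())
    print(f"{gain:.0%} gain -> {total:,.0f} per year")
```

With these placeholder figures the band works out to 9 million to 18 million per year; replacing them with your own audited costs turns the checklist item into a concrete business case.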

IBM Q or Google Sycamore: Which Quantum Cloud Service Suits Research?

Once you’ve identified a need, the next practical question is where to begin experimenting. Accessing quantum computers no longer requires building a lab; it’s available via the cloud. Several major players dominate this emerging market, each with a different strategic focus that suits different business needs. For a logistics strategist, the choice isn’t about picking the “best” platform, but the one that aligns with your current technical capabilities and long-term goals. The main contenders—IBM, Google, AWS, and D-Wave—offer distinct approaches to quantum access.

IBM has focused on building an enterprise-ready ecosystem. As the first to put real quantum hardware on the cloud, its IBM Quantum platform is mature and well-documented, making it a strong choice for companies already integrated into the IBM cloud ecosystem. A key strength is the IBM Quantum Network, which includes over 210 partners from Fortune 500 companies to universities, all experimenting with the technology to solve real-world problems. This provides a rich community and knowledge base.

Google Quantum AI, home of the Sycamore and Willow chips, is geared more towards cutting-edge research. It provides access to high-performance superconducting processors, largely through research collaborations rather than open public access, and is ideal for teams with deep technical expertise looking to develop novel algorithms or push the boundaries of quantum error correction. Other platforms like Amazon Braket offer a multi-vendor approach, allowing users to test algorithms on hardware from different providers (like IonQ and Rigetti) through a single interface, which is excellent for comparative research. D-Wave, a pioneer in quantum annealing, is specifically designed for optimization problems and has been used in logistics projects for years.

This table summarizes the strategic positioning of the major quantum cloud providers, helping you align their offerings with your company’s research and development goals.

| Platform | Focus | Best For | Key Features |
|---|---|---|---|
| IBM Quantum | Enterprise-ready solutions | Companies already using IBM cloud | First to put real quantum hardware on the cloud; has steadily built a global ecosystem (IBM Quantum Network). |
| Google Quantum AI | Cutting-edge research | Advanced algorithm development | Google’s superconducting quantum processors (the technology behind Sycamore and the newer Willow chip), available largely to research collaborators; focus on pushing qubit performance and researching error correction. |
| Amazon Braket | Multi-vendor access | Exploring different hardware | Access to quantum processors from multiple vendors (e.g., IonQ, Rigetti) through a single interface. |
| D-Wave Leap | Optimization problems | Logistics and scheduling | Quantum annealing; has been used for logistics and scheduling solutions in real-world projects. |

The Cryogenic Constraint That Keeps Quantum Computers Out of Offices

One of the most enduring images of quantum computing is the complex chandelier of wires and tubes housed within a large cryogenic refrigerator. Superconducting qubits, the type used by Google and IBM, must be kept at temperatures colder than deep space (around 15 millikelvin) to maintain their fragile quantum states and prevent “decoherence”—the loss of quantum information due to environmental noise. This has led to the common belief that the primary barrier to widespread adoption is this massive, expensive hardware requirement. Many assume quantum computers will always be confined to specialized data centers.

While the cryogenic constraint is a real engineering challenge, focusing on it is a strategic misstep. First, alternative quantum computing architectures that don’t require extreme cold are rapidly advancing. Photonic quantum computers, which use particles of light as qubits, can perform much of their processing at room temperature, although many designs still cool their single-photon detectors. These systems are becoming more powerful and could lead to more compact and accessible hardware, as visualized in the conceptual image below.

This image illustrates a future where quantum processing isn’t hidden away in a cryogenic facility but is integrated into more conventional environments. But more importantly, the cloud model makes the physical location of the hardware irrelevant for the end-user. The real bottleneck for a logistics strategist is not hardware temperature, but data logistics. As one industry report astutely notes, the immediate hurdle is operational, not physical.

The real constraints for logistics are data-related: the security risks and latency of sending massive, proprietary logistics datasets to a quantum cloud provider for processing.

– Industry Analysis, Quantum Computing in Logistics Report

This is the critical insight. Your focus should not be on when a quantum computer will fit in your office, but on how you will build a secure, low-latency data pipeline to a quantum cloud provider. This involves data governance, encryption, and network architecture—problems you can and should be solving today.
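One practical piece of that pipeline is minimizing what leaves your network in the first place. The sketch below, a minimal example assuming a simple shipment record format, keeps only the fields an optimizer needs and replaces customer and site identifiers with keyed HMAC-SHA256 pseudonyms before upload. The field names, the 16-character truncation, and the in-memory key are illustrative assumptions; in production the key would live in your own key-management system, and this step complements, rather than replaces, encrypting data in transit.

```python
import hashlib
import hmac
import secrets

# Assumption: generated in memory for the demo; in practice, fetch from your own KMS.
PSEUDONYM_KEY = secrets.token_bytes(32)


def pseudonymize(identifier: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Replace a customer or site ID with a keyed hash the provider cannot reverse."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def prepare_for_upload(shipments, key=PSEUDONYM_KEY):
    """Keep only the fields the optimizer needs, with identifiers pseudonymized."""
    return [
        {
            "origin": pseudonymize(s["origin"], key),
            "destination": pseudonymize(s["destination"], key),
            "weight_kg": s["weight_kg"],
        }
        for s in shipments
    ]


shipments = [  # hypothetical records; customer_name never leaves the building
    {"origin": "DEPOT-A", "destination": "CUSTOMER-1042", "weight_kg": 320, "customer_name": "Acme"},
    {"origin": "DEPOT-A", "destination": "CUSTOMER-2211", "weight_kg": 150, "customer_name": "Globex"},
]
payload = prepare_for_upload(shipments)
# Reverse lookup kept on-premises to translate the optimizer's answer back.
lookup = {pseudonymize(s[f]): s[f] for s in shipments for f in ("origin", "destination")}
```

Because the same identifier always maps to the same pseudonym, the optimizer can still see that two shipments share a depot, while the reverse lookup that turns its answer back into real locations never leaves your premises.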

When to Hire Quantum Developers: Signals That the Tech Is Mature Enough

For many strategists, the idea of hiring a “Quantum Developer” sounds like science fiction. However, the talent market is heating up far faster than most realize, and waiting too long could mean being locked out by demand. A recent analysis revealed a 450% increase in job postings for quantum-related roles, signaling a land grab for a very scarce talent pool. The key is not to hire blindly but to watch for clear, business-centric signals that indicate the technology is mature enough to justify the investment for your specific use case.

The first and most important signal is the maturation of quantum software libraries. Early quantum programming required deep knowledge of physics. Today, high-level libraries like IBM’s Qiskit or Google’s Cirq are abstracting away much of the underlying complexity. When you can hire a developer who can focus on translating your logistics problem into a quantum algorithm without needing a Ph.D. in quantum mechanics, the barrier to entry has significantly lowered. The demand is shifting from pure research scientists to quantum software engineers and algorithm developers.

Other signals are external and relate to the competitive landscape. When you see direct competitors in logistics not just announcing pilots, but publishing results with a clear ROI, the experimental phase is over. Likewise, the global talent pool itself is a signal. The number of graduates with quantum-relevant skills is growing, with forecasts suggesting the creation of around 250,000 new jobs in the sector. Investing in a small team to build in-house expertise can be a powerful strategic hedge, allowing you to evaluate vendor claims and rapidly deploy solutions when the time is right.

Key Hiring Signals for Quantum Investment:

- Signal 1: Maturing Software Libraries: Quantum libraries like Qiskit and Cirq become capable of abstracting away the complex physics, allowing developers to focus on problem formulation.

- Signal 2: Competitor Adoption: Tech giants and, more importantly, direct competitors in your industry begin reporting positive ROI from quantum optimization experiments in real-world applications.

- Signal 3: Growing Talent Availability: The global quantum talent pool is expanding, with universities producing more graduates with quantum information science skills. The Quantum Insider forecasts that the sector will create approximately 250,000 new jobs.

- Signal 4: Demonstrable ROI in Logistics: Major companies begin to consistently show positive, quantifiable returns from quantum pilots specifically in logistics optimization, moving beyond theoretical papers.

Why Electric Motors Deliver Peak Torque at Zero RPM?

To truly grasp the paradigm shift quantum computing represents for optimization, it helps to step away from computing and consider a more physical analogy: the electric motor versus the internal combustion engine (ICE). An ICE generates power through a series of controlled explosions, needing to build up speed (RPMs) to reach its peak torque and power. It’s a sequential, iterative process. It can’t deliver its full strength from a standstill. This is analogous to a classical computer tackling an optimization problem—it must iterate through many cycles and potential solutions, gradually “ramping up” as it searches the solution space.

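An electric motor, by contrast, operates on a fundamentally different principle. Its torque is proportional to the current in its windings, and at standstill there is no back-EMF opposing the supply voltage, so it can deliver its maximum rated torque instantly, at zero RPM. There is no ramp-up. The power is immediate. This is a useful analogy for how a quantum computer approaches an optimization problem. Thanks to superposition, it can, in a sense, engage with the entire problem space at once rather than walking through it one candidate at a time. The analogy has limits: today’s practical quantum optimization methods, such as QAOA, still run iterative loops in which a classical optimizer tunes the quantum circuit, so the advantage lies in how each step explores the space, not in skipping iteration altogether.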
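The zero-RPM torque claim follows from a simple steady-state model of an idealized brushed DC motor: back-EMF grows with speed and opposes the supply voltage, so current, and therefore torque, is highest at standstill. The sketch below assumes hypothetical parameter values (with the torque and back-EMF constants equal, as they are in SI units for an ideal motor) and a controller current limit, which is what flattens the curve at low speed in real drives; it ignores friction, inductance, and field weakening.

```python
def dc_motor_torque(speed_rad_s, voltage=48.0, resistance=0.5,
                    k_t=0.2, k_e=0.2, current_limit=60.0):
    """Steady-state torque (N*m) of an idealized brushed DC motor at a given shaft speed.

    Back-EMF (k_e * speed) opposes the supply voltage, so current, and with it
    torque (k_t * current), is highest at standstill. The controller's current
    limit caps that peak. All parameter values are hypothetical.
    """
    current = (voltage - k_e * speed_rad_s) / resistance
    current = max(0.0, min(current, current_limit))  # no regeneration, capped by controller
    return k_t * current


for speed in (0, 60, 120, 180, 240):  # shaft speed in rad/s
    print(f"{speed:>3} rad/s -> {dc_motor_torque(speed):5.2f} N*m")
```

Evaluating the function across speeds traces the classic torque-speed curve: flat at the current limit near zero speed, then falling linearly to zero at the no-load speed, where back-EMF equals the supply voltage (240 rad/s with these parameters).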

As one industry comparison study eloquently puts it, this difference is at the heart of the quantum advantage.

Quantum computing is to classical computing what an electric motor is to an internal combustion engine – providing instant power versus gradual ramp-up.

– Quantum Computing Analogy, Industry Comparison Study

For a logistics strategist dealing with the TSP, this means a shift from planning based on “good enough” routes found after hours of computation to potentially having the single best route calculated in minutes or seconds. The operational implications are profound, enabling dynamic, real-time re-routing at a level of efficiency that is simply impossible with today’s “internal combustion” computational models. The “instant torque” of quantum computing allows a business to react to disruptions with optimal solutions, not just hasty patches.

Why Generative Models Predict Demand Better Than Traditional Statistics?

While the focus of quantum in logistics is often on routing optimization, its power will also supercharge other advanced technologies you are already exploring, most notably AI-driven demand forecasting. Traditional statistical methods, like moving averages or ARIMA models, are backward-looking. They excel at identifying patterns in historical data but struggle to predict outcomes when faced with unprecedented events or complex, non-linear correlations—a common occurrence in modern supply chains.

Generative AI models, like those used in large language models, represent a step-change. They learn the underlying distribution of the data, enabling them to generate new, synthetic-but-plausible scenarios. This allows them to predict demand not just based on what *has* happened, but on a rich spectrum of what *could* happen. However, even these powerful models are constrained by the classical computers they run on. As the number of variables (e.g., weather patterns, social media trends, competitor pricing, geopolitical events) increases, the computational load becomes immense.
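The difference between a point forecast and a distribution of plausible futures can be shown with a deliberately simple stand-in. The sketch below is not a generative AI model: it produces a classical moving-average forecast, then generates many demand scenarios by resampling the forecast errors seen in the past (a bootstrap). The demand history and function names are hypothetical. Its value is in the shape of the output, a spread of outcomes that planners can stress-test against, rather than in its accuracy.

```python
import random
import statistics


def moving_average_forecast(history, window=3):
    """Classical point forecast: the mean of the last `window` observations."""
    return statistics.fmean(history[-window:])


def sample_demand_scenarios(history, n_scenarios=1000, window=3, seed=0):
    """Toy generative step: resample past forecast errors to produce many plausible futures.

    This bootstrap is a simple stand-in for a learned generative model; it can
    only recombine error patterns already present in `history`.
    """
    rng = random.Random(seed)
    residuals = [history[t] - statistics.fmean(history[t - window:t])
                 for t in range(window, len(history))]
    base = moving_average_forecast(history, window)
    return [max(0.0, base + rng.choice(residuals)) for _ in range(n_scenarios)]


weekly_units = [120, 135, 128, 150, 170, 160, 210, 190, 205, 230]  # hypothetical history
point = moving_average_forecast(weekly_units)
scenarios = sorted(sample_demand_scenarios(weekly_units))
p10, p90 = scenarios[100], scenarios[899]
print(f"point forecast {point:.1f}; 80% scenario band {p10:.1f} to {p90:.1f}")
```

A learned generative model would go further by conditioning those scenarios on drivers such as weather or competitor pricing, and by producing futures that never appeared in the history; the bootstrap can only recombine errors it has already seen.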

This is where the synergy with quantum computing could become a game-changer. Quantum machine-learning algorithms have been proposed that may find correlations within these massive, high-dimensional datasets more efficiently than classical machines, although loading large classical datasets into a quantum processor is itself a known bottleneck and a practical advantage has yet to be demonstrated. By feeding the complex correlational analysis from a quantum processor into a generative AI model, we could create a hybrid system that is substantially more powerful. The goal is demand forecasting that is not only more accurate but also more resilient and adaptive to black swan events.

The synergy between quantum processing and AI is a powerful combination for understanding complex, interconnected systems like global demand.

This hybrid approach is already being explored by industry leaders who see the immense potential in combining these two frontier technologies.

Case Study: Ford’s Quantum-Enhanced Demand Forecasting

Automaker Ford is actively using quantum computing to improve its demand forecasting models. By leveraging quantum algorithms to analyze a wider array of variables and their complex interdependencies, Ford aims to significantly enhance the accuracy of its forecasts. This leads directly to better inventory management, more efficient production planning, and a more resilient supply chain, demonstrating a clear, practical application of quantum-AI synergy.

Key Takeaways

- Quantum computing is a paradigm shift from sequential problem-solving to a fundamentally different way of exploring solution spaces, making it a promising candidate for NP-hard logistics challenges.

- The real immediate barrier is not hardware, but your organization’s data strategy, security posture, and ability to frame problems for quantum systems.

- The path to quantum readiness begins today by identifying core optimization problems, tracking algorithm maturity, and preparing for a new era of data security.

How Quantum Computing Threatens Current Complex Cryptographic Problems?

Beyond the promise of optimization, the immense power of quantum computing carries a critical, time-sensitive threat that every logistics strategist must address: the end of public-key cryptography as we know it. The security of global commerce, from financial transactions to proprietary shipping manifests, relies on mathematical problems such as factoring large numbers (the basis of RSA encryption) and computing discrete logarithms (the basis of elliptic-curve cryptography). These problems are intractable for classical computers but could be solved efficiently, in polynomial time, by a sufficiently powerful, error-corrected quantum computer running Shor’s algorithm.
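Shor’s algorithm is mostly classical; the quantum computer is needed for one step, finding the order r of a number a modulo N (the smallest r with a^r mod N = 1). Once r is known, the factors of N follow from two greatest-common-divisor calculations. The sketch below shows that reduction with the order-finding step done by brute force, which is only feasible for toy numbers; it assumes N is an odd composite that is not a prime power, and the function names are hypothetical.

```python
import math


def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force (requires gcd(a, n) == 1).

    This is the step Shor's algorithm speeds up with quantum period finding;
    this loop takes time exponential in the number of digits of n.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_via_order(n, a):
    """Classical wrapper of Shor's algorithm: turn the order of a mod n into factors of n."""
    g = math.gcd(a, n)
    if g != 1:
        return g, n // g          # lucky guess: a already shares a factor with n
    r = find_order(a, n)
    half = pow(a, r // 2, n)
    if r % 2 == 1 or half == n - 1:
        return None               # unlucky choice of a; retry with another value
    return math.gcd(half - 1, n), math.gcd(half + 1, n)


print(factor_via_order(15, 7))   # (3, 5)
print(factor_via_order(21, 2))   # (7, 3)
```

For a 2048-bit RSA modulus the brute-force loop would never finish, which is what keeps RSA safe today; a large, error-corrected quantum computer would replace that loop with quantum period finding and make the whole procedure run in polynomial time.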

This is not a distant, theoretical risk. The rapid advancement in quantum hardware has made “harvest now, decrypt later” a live threat: malicious actors may already be capturing your encrypted logistics data and storing it in the expectation that a future quantum computer will decrypt it. For a logistics company, this could mean competitors gaining access to pricing strategies, customer lists, and optimal routes, or state actors disrupting critical supply chains. The pace of progress is relentless; in just three years, as IBM notes, we’ve gone from 24 qubits to over 400, with 1,000+ qubit machines on the near horizon. Raw qubit counts do not translate directly into code-breaking ability, since attacking RSA requires large numbers of error-corrected qubits, but the window to act is closing.

The solution is a transition to Post-Quantum Cryptography (PQC)—a new generation of encryption algorithms believed to be resistant to attacks from both classical and quantum computers. This is not a simple software patch; it’s a fundamental infrastructure overhaul. For a logistics network with thousands of interconnected devices, from servers to IoT sensors on containers, this migration is a massive undertaking. It requires a complete inventory of all cryptographic systems and a phased plan for upgrading them. Waiting until the threat is imminent will be too late. The time to build “cryptographic agility” is now.

Action Plan: Your Post-Quantum Cryptography Migration

- Conduct a Crypto-Inventory: Create a comprehensive list of all systems in your supply chain that use encryption. This must include everything from backend servers and databases to mobile devices, fleet management systems, and IoT sensors on assets.

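- Prioritize Data Protection: Identify and prioritize the most sensitive and long-lived data. Customer contracts and strategic routing plans, which can stay valuable for years, should be the first targets for PQC protection; real-time fleet management data loses value quickly, but the systems that carry it still belong in the migration plan.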

- Begin PQC Algorithm Testing: Start testing NIST-standardized post-quantum algorithms, such as ML-KEM (FIPS 203) for key establishment and ML-DSA (FIPS 204) for digital signatures, on non-critical logistics applications to understand their performance overhead and integration challenges.

- Assess Performance Impact: Evaluate the computational and latency overhead of PQC algorithms on your existing infrastructure, particularly on low-power IoT devices where performance is critical.

- Develop a Migration Timeline: Create a formal, multi-year migration timeline that is aligned with the latest quantum threat assessments from security agencies and industry groups.
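The crypto-inventory and migration timeline can feed a simple prioritization rule known as Mosca’s inequality: if the number of years data must stay secret plus the years needed to migrate a system exceeds the years until a cryptographically relevant quantum computer exists, that system is already at risk. The sketch below applies it to a hypothetical inventory. The asset names, the year estimates, and the 5-year threat horizon are placeholders rather than predictions, and the set of vulnerable algorithms is simplified to the main public-key schemes that Shor’s algorithm targets.

```python
from dataclasses import dataclass

# Simplified: public-key schemes a large fault-tolerant quantum computer running Shor would break.
QUANTUM_VULNERABLE = {"RSA", "ECDSA", "ECDH", "DH", "DSA"}


@dataclass
class CryptoAsset:
    system: str             # hypothetical system name from the crypto-inventory
    algorithm: str          # public-key algorithm in use
    secrecy_years: float    # how long the protected data must stay confidential
    migration_years: float  # estimated time to move this system to PQC


def mosca_at_risk(asset: CryptoAsset, years_to_quantum_threat: float) -> bool:
    """Mosca's inequality: at risk if secrecy time + migration time > time until the threat."""
    return (asset.algorithm in QUANTUM_VULNERABLE
            and asset.secrecy_years + asset.migration_years > years_to_quantum_threat)


def prioritize(inventory, years_to_quantum_threat):
    """At-risk assets only, most overdue (largest margin) first."""
    at_risk = [a for a in inventory if mosca_at_risk(a, years_to_quantum_threat)]
    return sorted(at_risk,
                  key=lambda a: a.secrecy_years + a.migration_years - years_to_quantum_threat,
                  reverse=True)


inventory = [  # hypothetical entries
    CryptoAsset("customer contract archive", "RSA", secrecy_years=10, migration_years=3),
    CryptoAsset("container IoT sensors", "ECDH", secrecy_years=0.1, migration_years=5),
    CryptoAsset("real-time fleet telemetry", "ECDSA", secrecy_years=0.01, migration_years=2),
    CryptoAsset("backup vault", "ML-KEM", secrecy_years=15, migration_years=0),
]
for asset in prioritize(inventory, years_to_quantum_threat=5):  # placeholder horizon
    print(asset.system)
```

With these placeholders, the contract archive tops the list because its data must stay secret for a decade, and the container sensors are flagged despite carrying short-lived data, because the five years needed to migrate them, plus a short secrecy window, exceed the threat horizon. That second case is why low-power IoT fleets deserve early attention.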

The journey into the quantum era of logistics is not about waiting for a perfect, turnkey solution. It is about making strategic decisions today. By reframing your most difficult challenges, building data agility, and proactively addressing cryptographic vulnerabilities, you can position your organization not merely to survive the quantum disruption, but to lead it. The next step is to initiate the crypto-inventory and problem classification within your own operations.